Ethereum's 7-block Reorg

How it happened!

On May 25, the Eth2 Beacon Chain’s validators fell out of step after a client update gave some clients a boost but left validators who had not yet upgraded following different rules. In total, seven blocks, in slots 3,887,075 to 3,887,081, were knocked out of the Beacon Chain between 08:55:23 and 08:56:35 UTC. Before I explain how that happened, I’ll need to explain what a blockchain reorganization means.

A blockchain reorganization (reorg) occurs when nodes disagree on the order of the most recent blocks. Blockchains are meant to record batches of transactions chronologically, but if some nodes are faster than others, they may not agree on which block should come first. On proof-of-work chains, a reorg most commonly takes place after two blocks have been mined at the same time.
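To make the idea of reorg depth concrete, here is a minimal sketch in Python. The parent map is a hypothetical toy chain, labeled with the short slot numbers 73 to 82 used later in this article, and it assumes that 74 and 75 both build on 73 while 82 builds on 74. The helper names `chain_to_root` and `reorg_depth` are made up for illustration and do not come from any client.

```python
def chain_to_root(head, parent):
    """Walk parent links from head back to the root and return the blocks visited."""
    chain = []
    while head is not None:
        chain.append(head)
        head = parent.get(head)
    return chain


def reorg_depth(old_head, new_head, parent):
    """Count the blocks on the old chain that are dropped when the head switches."""
    new_chain = set(chain_to_root(new_head, parent))
    depth = 0
    for block in chain_to_root(old_head, parent):
        if block in new_chain:
            break  # reached the common ancestor
        depth += 1
    return depth


# Assumed toy topology: 74 and 75 both build on 73,
# 76..81 extend 75, and 82 extends 74.
parent = {73: None, 74: 73, 75: 73, 82: 74}
for slot in range(76, 82):
    parent[slot] = slot - 1

print(reorg_depth(81, 82, parent))
```

Under that assumed topology, switching the head from 81 to 82 drops the seven blocks 75 through 81, which is what "7-block reorg" refers to.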

The Beacon Chain is Ethereum's new proof-of-stake consensus layer. Time on it is divided into slots (12 seconds) and epochs (32 slots). One validator is randomly selected to be the block proposer in every slot. This validator is responsible for creating a new block and sending it out to other nodes on the network. Proposer boost is a defense against various attacks on the LMD GHOST fork-choice rule. It gives a block that arrives “on time” extra weight on top of the attestation votes that are used to determine the head of the chain.
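The slot and epoch arithmetic can be checked with a few lines of Python. This is a sketch that assumes the mainnet Beacon Chain genesis timestamp of 1606824023 (2020-12-01 12:00:23 UTC), which is not stated in this article. The helper names `slot_start` and `epoch_of` are made up for illustration.

```python
from datetime import datetime, timezone

GENESIS_TIME = 1606824023  # assumed mainnet Beacon Chain genesis (Unix seconds)
SECONDS_PER_SLOT = 12
SLOTS_PER_EPOCH = 32


def slot_start(slot):
    """UTC time at which the given slot begins."""
    return datetime.fromtimestamp(GENESIS_TIME + slot * SECONDS_PER_SLOT, tz=timezone.utc)


def epoch_of(slot):
    """Epoch that contains the given slot."""
    return slot // SLOTS_PER_EPOCH


# First and last slot of the reorged range mentioned above.
for slot in (3_887_075, 3_887_081):
    print(slot, slot_start(slot).isoformat(), "epoch", epoch_of(slot))
```

With that genesis time, the two slots start at 08:55:23 and 08:56:35 UTC on May 25, 2022, matching the window quoted at the top of the article.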

So, how did the reorg happen?

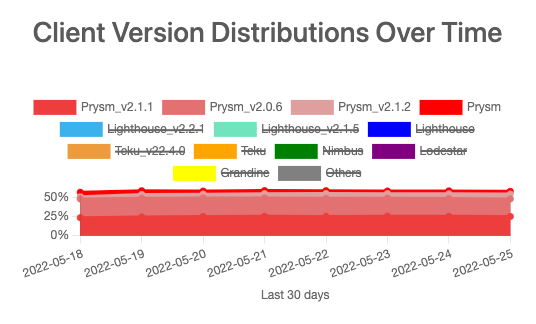

Proposer boost is a non-consensus-breaking change, so, given the asynchronicity of the client release schedules, the roll-out happened gradually. Not all nodes enabled proposer boost simultaneously.

Client, boost-enabled release, date:

Lighthouse, v2.2.0, Apr/5

Teku, v22.4.0, Apr/7

Prysm, v2.1.0, Apr/26

Lodestar, v0.36.0, May/11

Nimbus, flipped to default in v22.5.1, May/21

To simplify what the fork looked like, let's assume two types of nodes:

Node without boost

Node with boost

Here is the data gathered from our local node:

Block 74 arrived 12.23s late

Block 75 arrived on time, 579ms into the slot

When our node processed attestations at the start of slot 76, it reorged back to block 74. This gave us confidence that the boost had been applied to block 75.

Block 76 also arrived early and was boosted. It became head, and the node reorged from 74 to 76.

The same pattern persisted from 75 to 81. In every slot where our node received the block “on time”, the block got boosted and became head, even though the LMD weight of 74 continued to win.

Block 74 consistently had a higher LMD weight from 75 to 81. This means enough non-boost-enabled nodes attested for 74 in every slot. At the same time, a boost of 70% is high enough to contest 74 as the head.
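The tug-of-war between LMD weight and the boost can be sketched with a toy model. This is not the fork-choice code of any client: the function `choose_head`, the 52/48 attestation split, and the committee weight of 100 units are all made-up values for illustration. Only the 70% boost figure comes from the text above.

```python
PROPOSER_SCORE_BOOST = 70  # percent of one slot's committee weight (the 70% cited above)


def choose_head(lmd_weight, committee_weight, timely_block=None, boost_enabled=True):
    """Return the branch with the highest score.

    lmd_weight: dict mapping a branch name to its attestation weight.
    timely_block: branch whose newest block arrived on time in the current slot.
    """
    score = dict(lmd_weight)
    if boost_enabled and timely_block is not None:
        score[timely_block] += committee_weight * PROPOSER_SCORE_BOOST // 100
    return max(score, key=score.get)


# Hypothetical numbers: each slot's committee carries 100 units of weight
# and splits 52/48 in favour of the branch that contains block 74.
committee_weight = 100
lmd = {"branch_74": 0, "branch_75": 0}
for slot in range(75, 82):
    lmd["branch_74"] += 52
    lmd["branch_75"] += 48
    boosted = choose_head(lmd, committee_weight, timely_block="branch_75")
    plain = choose_head(lmd, committee_weight, boost_enabled=False)
    print(slot, lmd, "| boosted node:", boosted, "| non-boosted node:", plain)
```

In this toy run the non-boosted view always prefers the branch with block 74, while the boosted view keeps preferring the branch with the freshly arrived block, because a small per-slot lead in attestations is not enough to outweigh a 70% boost.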

The likely conclusion is:

Proposers from 75 to 81 all had boost enabled.

The proposer at 82 did not have boost enabled and followed LMD weight alone, with no boost applied to 81.

The attestations observed during those slots validate this analysis. The validators were split between the two branches, but always with slightly more for 74, which is why the split kept going. Fingerprint data provided by @sproulM_ shows that there were no Prysm proposers between 75 and 81. But why does this matter?

It matters because Prysm released “proposer boost” three weeks later than Lighthouse and Teku, so a Prysm node was less likely to have boost enabled than a node running those clients. A proposer without boost would have resolved the fork somewhere between 75 and 81, but that didn’t happen.

If we assume the following:

Most of the Lighthouse nodes are boosted

Most of the Teku nodes are boosted

Half of the Prysm nodes are boosted

Half of the Lodestar nodes are boosted

Half of the Nimbus nodes are boosted

That gives roughly 75% boosted nodes and 25% non-boosted nodes in the network. Such an incident had roughly a 0.25^6 chance of happening. So is it a big coincidence, or should I say a mistake?
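The arithmetic behind this estimate is easy to check. The sketch below evaluates the 0.25^6 figure quoted above and, purely for comparison, the chance that seven consecutive proposers (75 through 81) all fall in the boosted ~75% group. Both numbers assume that each proposer is drawn independently from the 75/25 split, which is a simplification and not something the data here confirms. The variable names are made up for illustration.

```python
p_boosted = 0.75            # assumed share of boost-enabled nodes
p_not_boosted = 1 - p_boosted

# The figure quoted in the text.
estimate_in_text = p_not_boosted ** 6

# Comparison: seven proposers in a row (75 to 81) all boost-enabled,
# assuming independent draws from the 75/25 split.
seven_boosted_in_a_row = p_boosted ** 7

print(f"0.25^6 = {estimate_in_text:.6f}")
print(f"0.75^7 = {seven_boosted_in_a_row:.4f}")
```

Which of the two figures is the better model depends on what exactly is being counted, so treat both as rough orders of magnitude.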

The change in client behavior, “proposer boost”, was meant to strike a better balance and be safer against both kinds of reorg attack, ex-post and ex-ante. Clients simply updated their rule for what they consider the “best block”.

Let’s consider the following situation. You are the proposer of n+4, and you see that n+2 and n+3 both build on n+1. You need to decide whether to continue on n+3 or n+2.

First, you might think: n+3 was an attacker and purposefully ignored n+2 to try to (ex-post) reorg n+2. However, it is also possible that n+2 was deliberately holding back its block giving n+3 no other choice than to build on n+1 (ex-ante reorg).

Depending on how the majority of clients behave in an n+4 situation, it will either make it easier for an attacker to perform ex-post or ex-ante reorgs. Now, research showed that previously ex-post reorgs were very hard to do but ex-ante reorgs were somewhat possible.

While it technically was not a consensus-breaking change (clients with both implementations will still come to a consensus with each other eventually), changing the rules of what to consider the best block for only some clients makes reorgs much more likely.

Frankly speaking, reorgs are expected on most blockchains. Perhaps the only way to prevent reorgs entirely is to set up the blockchain in a way that puts it at risk of stopping altogether at some point. The reality is that a 7-block reorg had not happened in years on Ethereum, and it could have caused significant pain for many applications.

In conclusion, this was caused by different client implementations rolling out this update at different times, and by validators updating at different times. It took a non-trivial split between boosted and non-boosted nodes in the network, combined with the timing of a late-arriving block. We will likely see fewer of these if such updates are approached more mindfully in the future and more nodes update to boost-enabled releases.
